If AI has you worried about your job, you’re not alone.

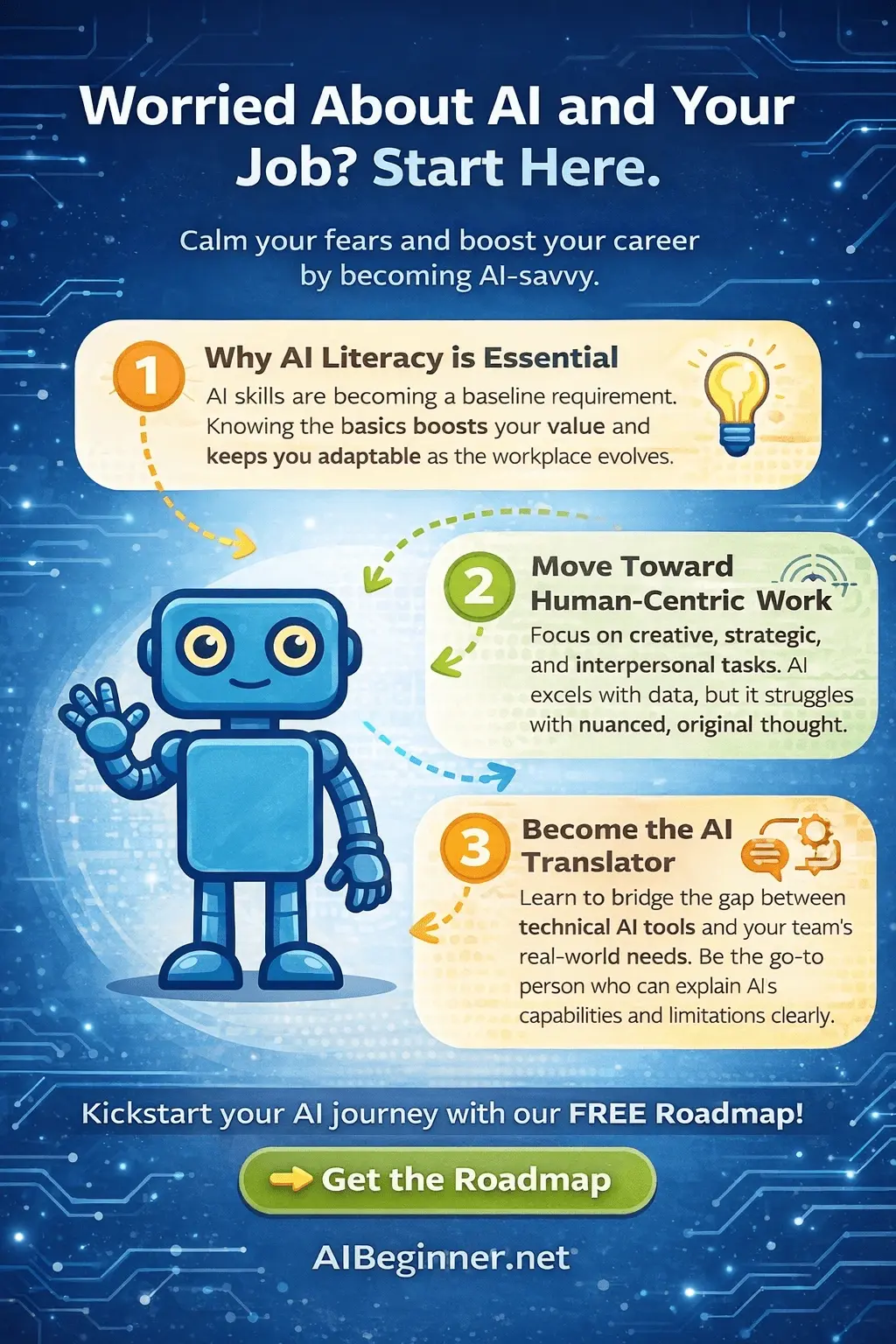

Quick takeaway: The safest move is neither panicking nor ignoring AI. It is building AI literacy as a baseline, shifting toward work AI struggles with, and becoming the person who can translate “AI talk” into real business outcomes.

Quick answer for professionals: If AI has you concerned about long-term job security, the better question is not “Will AI replace me?” but “How prepared is my organization to use AI responsibly?” That is exactly why we created the AI Readiness in the Workplace guide and the AI Readiness Score — to help professionals and leaders evaluate readiness before rushing into tools.

Why this topic matters right now

AI isn’t just a new app — it’s becoming a new layer of work. When tools can draft, summarize, analyze, and brainstorm in seconds, expectations change. That can feel unsettling… especially if you haven’t had a calm, practical starting point.

1) AI literacy is becoming a baseline skill

AI literacy doesn’t mean you need to code. It means you can:

- Use AI tools safely (and know what not to share)

- Give clear instructions and iterate (prompting + feedback loops)

- Evaluate outputs (spot errors, bias, missing context, and “confident nonsense”)

- Apply AI to real tasks (emails, summaries, planning, research, drafts)

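Major workforce reports, including those from the World Economic Forum, the OECD, LinkedIn, and Microsoft listed under References, now treat AI literacy and related skills as mainstream upskilling topics rather than niche technical skills.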

Calm truth: In many roles, the advantage won’t come from “being the best at AI.” It’ll come from being the person who can use AI responsibly and turn it into repeatable workflows.

What AI literacy looks like in a normal week

- Running meeting notes through an AI tool and producing a clear action list

- Drafting an email, then editing it with your own voice and context

- Turning a messy idea into a simple plan (steps, risks, owners, timeline)

- Explaining AI results to a teammate in plain English

2) Move toward the kinds of work AI struggles with

AI is strong at pattern-based drafting and summarizing. It struggles when the work requires human context, judgment, and responsibility.

Work AI struggles with (and why that matters)

- Owning decisions: AI can suggest — it can’t be accountable.

- Reading the room: nuance, timing, trust, and relationships.

- Defining the right problem: “What should we do?” beats “What can we write?”

- Edge cases: unusual scenarios, exceptions, constraints, trade-offs.

- Ethics + risk: safety, privacy, policy, compliance, and impact.

- Original strategy: choosing direction, prioritizing, and aligning people.

So the best “job protection” strategy usually looks like this:

- Let AI do the first draft work (speed)

- You do the decision work (judgment)

- Together you produce better output — faster (leverage)

Want a calm, step-by-step starting point?

If you want a structured way to build confidence without overwhelm, start with the free 30-Day AI Roadmap.

3) Become the “AI translator” at work (and increase your influence)

Most teams don’t need an “AI expert.” They need someone who can translate:

- Leadership goals → practical use cases

- Tool capability → realistic expectations

- Promising outputs → verified, safe, usable deliverables

- Risk and policy → simple guardrails people actually follow

What an AI translator actually does

- Turns “We should use AI” into: one workflow, one team, one measurable outcome

- Creates prompt templates (with examples) so results are consistent

- Builds a lightweight process: draft → review → approve → share

- Helps others avoid common mistakes (oversharing data, trusting outputs blindly)
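
For teams that already script parts of their workflow, the prompt-template idea in the list above can be sketched in a few lines of Python. None of this is required to be AI literate; it is only an illustration. The template wording, the `MEETING_NOTES_PROMPT` name, the `build_prompt` helper, and the `team` and `notes` fields are all hypothetical, and the finished text would still be pasted into whatever approved tool your team uses.

```python
from string import Template

# Hypothetical reusable template for the meeting-notes task.
# The wording and the two placeholders ($team, $notes) are illustrative assumptions.
MEETING_NOTES_PROMPT = Template(
    "You are helping a $team team.\n"
    "Turn the meeting notes below into an action list.\n"
    "For each action, give an owner and a due date if the notes mention one.\n"
    "If something is unclear, list it under 'Open questions' instead of guessing.\n\n"
    "Notes:\n$notes"
)


def build_prompt(team: str, notes: str) -> str:
    """Fill in the two placeholders and return the finished prompt text."""
    return MEETING_NOTES_PROMPT.substitute(team=team, notes=notes)


if __name__ == "__main__":
    # Example with made-up notes; review the AI's answer before sharing it.
    print(build_prompt("marketing", "Dana to send the Q3 draft by Friday."))
```

Keeping the instructions fixed and changing only the notes is what makes results more consistent from one person to the next. The same effect can be had with a shared document that teammates copy from.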

Influence multiplier: When you help others use AI safely and effectively, you become a connector — between people, processes, and outcomes. That role tends to grow (not shrink).

A simple 30-minute weekly routine to stay ahead

If you want to reduce anxiety and build real momentum, try this:

- 10 minutes: Test AI on one real task you already do

- 10 minutes: Turn the best result into a reusable template

- 10 minutes: Share one helpful example with a teammate

Small, consistent reps beat occasional deep dives.

What to avoid (the mistakes that create real risk)

- Copy/paste confidence: trusting outputs without verifying

- Over-sharing: uploading sensitive or personal data to unapproved tools

- Tool-hopping: chasing “the best model” instead of building habits

- Trying to learn everything: focus on one workflow first

Career resilience in the AI era: a practical checklist

If you are worried about AI and your job, use this quick checklist to identify the highest-value next steps.

Key takeaways

- AI literacy is becoming a baseline skill in many roles — and it’s learnable without coding.

- Your safest career move is shifting toward judgment, context, relationships, and accountability.

- Becoming the AI translator at work increases your influence by turning AI into trusted workflows.

- Calm consistency wins: one tool, one workflow, weekly reps.

FAQ

Do I need to learn coding to be “AI literate”?

No. For most people, AI literacy means using AI tools safely and effectively: giving clear instructions, checking results, and applying AI to real tasks.

What are “AI-proof” skills?

Skills that rely on human context and responsibility — decision-making, communication, relationship-building, leadership, ethics, and problem framing.

What is an “AI translator”?

Someone who can turn AI capabilities into practical workflows, explain results in plain English, set lightweight guardrails, and help a team adopt AI responsibly.

How do I start if I feel overwhelmed?

Pick one tool and one workflow. Practice for 10–15 minutes a few times per week. A simple plan beats endless research.

What’s the biggest risk when using AI at work?

Two risks stand out: sharing sensitive data with unapproved tools, and trusting outputs without verification. Treat AI like an assistant, not an authority.

References & further reading

- World Economic Forum: Future of Jobs Report 2025

- OECD: Bridging the AI skills gap (AI literacy and training)

- LinkedIn: Work Change Report (AI literacy skills trends)

- Microsoft: Work Trend Index (AI at work)

Want a calm, step-by-step starting point?

Start the free 30-Day AI Roadmap (beginner-friendly, no hype, no jargon), or explore the AI Beginner Academy for structured courses (individual and workplace-friendly options).